
ETA Accuracy in Field Service: Why ETAs Require Optimization to Work

How accurate are your ETAs? Do clients find them useless? Learn why ETA accuracy breaks down without optimization and how to fix it in your field service operation.


ETA accuracy in field service is one of those metrics that gets better every year on paper while SLA compliance stays frustratingly flat.

Your GPS data is sharper, your traffic feeds are richer, and your ETA models are more sophisticated than they were three years ago.

And yet, missed compliance windows, reactive replanning, and frustrated customers still dominate your operations meetings.

This disconnect is a design problem more than a data quality problem.

In other words, accurate ETAs tell you with greater precision what's going to happen, but they don't change what actually happens.

Simply put:

Accurate ETAs are solid forecasts that expose how broken your field service execution is.

But making them more accurate isn't going to fix the problem for you.

That's why in this article we're going to explain why focusing on ETA accuracy stalls at scale and what execution-first operations do differently.

So if you're running 50+ technicians with SLA obligations and same-day volatility, and you send ETAs to customers or internal teams, this distinction will feel familiar.

Here's a quick overview of the key takeaways from this guide:

Key Takeaways

- ETAs are predictions that reflect the current plan under current assumptions. They're only valid until conditions change, which in live field operations happens constantly.

- ETAs drift because of reactive callouts, failed visits, and SLA overrides. More accurate GPS can't prevent these delays, because better location data doesn't solve the execution problem.

- Better ETA accuracy doesn't prevent failed visits, jobs, or work orders. An ETA notification that a technician will miss an SLA by 30 minutes doesn't stop the miss; it only tells you, more precisely, how much time you have left to react.

- Field service management software and telematics systems can track jobs and locations individually, but they don't turn real-time ETA updates into action: they lack the cross-route optimization logic needed to respond to drift.

- Execution optimization is the missing layer in high-maturity field service operations. It uses ETAs as triggers for dynamic replanning, which is what keeps those ETAs accurate.

What Is an ETA in Field Service Operations? And What Does It Actually Represent?

An estimated time of arrival (ETA) in field service operations is a forecast of when a technician will arrive at a job site, based on their current location, route sequence, service times, traffic conditions, and the priority order of the jobs ahead of it.

Every one of those inputs is a snapshot, and together they determine how accurate an ETA is. They're accurate at the moment the ETA is calculated and start to decay as soon as conditions change.

Traffic shifts. A job takes 20 minutes longer than expected. A technician can't get access to a site. Each change invalidates the original ETA.

Simply put:

An ETA tells you when the current plan says a technician will arrive, not when operational reality will actually get them there.

This distinction matters in field operations because customers and internal stakeholders often treat ETAs as commitments.

For example:

- When a customer sees a 2:00 PM arrival time, they clear their afternoon.

- When an operations manager sees an ETA within the SLA window, they move on to the next problem.

(Both are trusting a forecast that's only as reliable as the conditions it was built on.)

At scale, this gap compounds.

With 50+ technicians running multi-job days, even small deviations from plan ripple across every subsequent stop.

The ETA for job six carries the accumulated error of jobs one through five.
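To make the compounding concrete, here's a minimal Python sketch of a route forecast. It assumes fixed 20-minute drives, 45-minute jobs, and an 08:00 start (480 minutes after midnight); `Stop` and `forecast_etas` are hypothetical names for illustration, not part of any product API. Each arrival is simply the previous departure plus travel time, so any overrun on site is inherited by every later stop.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass
class Stop:
    name: str
    travel_min: int   # assumed drive time from the previous stop
    service_min: int  # planned time on site


def forecast_etas(start_min: int, stops: list[Stop],
                  overruns: dict[str, int] | None = None) -> dict[str, int]:
    """Forward pass over the route: arrival = previous departure + travel time.
    `overruns` adds extra on-site minutes at specific stops (hypothetical input)."""
    overruns = overruns or {}
    clock = start_min
    arrivals = {}
    for stop in stops:
        clock += stop.travel_min
        arrivals[stop.name] = clock
        clock += stop.service_min + overruns.get(stop.name, 0)
    return arrivals


# Six identical jobs starting at 08:00 (480 minutes after midnight).
route = [Stop(f"job{i}", travel_min=20, service_min=45) for i in range(1, 7)]
planned = forecast_etas(480, route)
# A 10-minute overrun at job two, then again at each of jobs three to five.
actual = forecast_etas(480, route, {f"job{i}": 10 for i in range(2, 6)})

for name in planned:
    print(name, "delay:", actual[name] - planned[name], "min")
```

The printed delays grow by 10 minutes per job (0, 0, 10, 20, 30, 40). No single overrun is large, but job six inherits all four of them.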

Why ETAs Drift in Live Field Operations

ETA drift is a predictable consequence of execution conditions changing throughout the working day.

The question isn't whether your ETAs will drift, but how quickly and how far.

The primary causes of drift in field service are familiar to anyone running live operations:

- Reactive callouts that displace planned work, pushing scheduled jobs later in the day

- Failed first visits that require rescheduling into an already-full route

- SLA priority overrides that protect one high-priority job by pushing three lower-priority jobs back

- Technician delays from access issues, longer-than-expected service times, or traffic that the model didn't anticipate

Each individual event is small and manageable in isolation.

The problem is the cascade of these events:

A 15-minute overrun at job two pushes job three back by 15 minutes, which pushes the lunch-adjacent job four into a tighter window, which makes the compliance-critical job five a coin flip.

ETAs drift because execution conditions drift. The ETA at that point is accurately reflecting a plan that's no longer valid.

And that's the core tension here:

The number on screen is technically correct, but the plan behind it has already broken.

More Accurate ETAs Offer a False Sense of Comfort

When SLAs slip and customers complain about arrival times, the instinctive response is to improve ETA accuracy.

Most field service companies tend to invest in:

→ Better telematics and GPS

→ Better traffic data

→ Better models

All of this makes sense as an investment. But on its own, it's the wrong one, because all you get is better visibility and forecasting.

Essentially:

More accurate ETAs deliver faster, more precise notification that a deadline will be missed. They don't prevent the miss itself.

You find out at 1:15 PM instead of 1:45 PM that your 2:00 PM SLA is at risk, but the outcome is the same: a planner scrambles to manually reshuffle the route, or the SLA breaks.

Knowing you'll miss the SLA sooner doesn't prevent missing it.

It gives you more time to act, which is helpful, but it doesn't act for you.

The distinction is between prediction improvement (better data, better models) and execution improvement (dynamic routing, cross-job replanning).

Here's what that looks like in practice:

| Investment | What it improves | What it doesn't change | Value to COO | Value to CFO |
|---|---|---|---|---|
| Better GPS / traffic data | Position accuracy, travel time estimates | Route sequence, job priority logic | More precise ETA notifications | No cost reduction |
| Better ETA modeling | Forecast reliability | Operational response to drift | Earlier awareness of SLA risk | No margin improvement |
| Dynamic routing | Route sequence adjusted in real time | Historical data quality | Fewer SLA breaches, less replanning | Reduced emergency costs |
| Cross-job replanning | Trade-offs across the full route | Underlying demand volatility | Planner capacity freed up | Lower cost per job completed |

This mistake is understandable.

ETAs are visible and measurable, so they attract investment.

Execution gaps are systemic and harder to quantify.

But the result is the same:

Field service organizations spend more to predict failure with greater precision while the failure itself continues.

Where ETAs Actually Break First in Your Operations

Standalone ETAs lose reliability fastest in exactly the conditions your operation probably runs in every day:

- Multi-job days: A 10-minute slip at job two can become a 40-minute miss by job six, because each downstream job inherits every delay that came before it and adds its own.

- Compliance windows: When a job has a hard arrival deadline, there's no buffer to absorb drift without triggering a replan.

- High-density routes: When many jobs are packed into a tight geographic area, one delay touches more jobs, faster.

- Same-day changes: These break the sequencing logic the original ETAs were built on. A reactive callout inserted at 10:30 AM invalidates every ETA calculated at 7:00 AM.

The simple truth is:

The more complex the day, the less useful ETAs become.

Complexity is where ETAs need optimization backing them, not just more accurate data.

In fact:

Nearly half (46%) of field service companies still struggle to meet SLAs, according to IFS's State of Service research.

That's a systemic execution gap, which has nothing to do with forecasting.

Why FSM and Telematics Can't Fix On-Time Execution

FSM and telematics systems are the right tools for what they're designed to do:

- FSM platforms manage work orders, track job status, and maintain the system of record.

- Telematics gives you live vehicle location, driving behavior, and current-position ETAs.

Both are essential.

But neither is built to optimize field service operations.

The architectural gap is straightforward:

FSM systems are job-centric. They calculate ETAs for individual jobs, one at a time, without the cross-job trade-off logic needed to ask:

Should we resequence this entire route to protect the most time-critical job?

Telematics gives you an accurate picture of where a technician is right now and when they'll arrive at the next stop. But it doesn't decide what should change when that arrival time puts an SLA at risk.

It doesn't adjust your plan; it only reports the position of your vehicles and technicians.

What's missing from both systems is system-initiated replanning.

Here's what we mean:

When an ETA degrades past a threshold, neither FSM nor telematics triggers a resequencing action. They make the problem visible to your operations managers, but they don't resolve it for them.
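The difference between reporting a degraded ETA and acting on it can be sketched in a few lines of Python. This is a toy model, not how any FSM, telematics, or optimization product works internally: it assumes a flat 20-minute drive between stops, SLA deadlines expressed in minutes after midnight, and hypothetical names (`Job`, `sla_lateness`, `check_and_replan`). When forecast SLA lateness crosses a threshold, the function searches alternative sequences of the remaining stops instead of only raising an alert.

```python
from __future__ import annotations
from dataclasses import dataclass
from itertools import permutations

TRAVEL_MIN = 20  # assumption: flat drive time between any two stops


@dataclass(frozen=True)
class Job:
    name: str
    service_min: int
    deadline: int | None = None  # latest SLA arrival, minutes after midnight


def arrivals(now: int, jobs) -> list[int]:
    """Forecast arrival times for the remaining jobs in the given order."""
    clock, out = now, []
    for job in jobs:
        clock += TRAVEL_MIN
        out.append(clock)
        clock += job.service_min
    return out


def sla_lateness(now: int, jobs) -> int:
    """Total minutes by which SLA deadlines are forecast to be missed."""
    return sum(max(0, eta - job.deadline)
               for job, eta in zip(jobs, arrivals(now, jobs))
               if job.deadline is not None)


def check_and_replan(now: int, remaining: list[Job], threshold_min: int = 0) -> list[Job]:
    """Threshold trigger: keep the plan while forecast lateness is tolerable,
    otherwise resequence the remaining stops to minimise it.
    Brute-force permutations only scale to a handful of stops."""
    if sla_lateness(now, remaining) <= threshold_min:
        return remaining
    return list(min(permutations(remaining), key=lambda seq: sla_lateness(now, seq)))


# 13:00 (780 min): job C has a 14:00 (840 min) SLA but sits last in the route.
plan = [Job("A", 60), Job("B", 45), Job("C", 30, deadline=840)]
print(sla_lateness(780, plan))                        # 105
print([j.name for j in check_and_replan(780, plan)])  # ['C', 'A', 'B']
```

Exhaustive search is only there to keep the example short. Real route optimizers rely on heuristics or solvers, because the number of possible sequences grows factorially with the number of stops.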

Solving the execution problem requires optimization logic that evaluates trade-offs across the full day's work.

That's not the same as rescheduling the next job in the queue.

To clarify:

FSM and CAFM are systems of record.

Telematics is the visibility layer.

Execution optimization is the missing layer between plan and outcome.

All three are essential for running field service operations at scale. But only the execution layer can automate operations planning and let you manage field work dynamically.

So you actually reduce costs, instead of piling them on.

The Cost of Improving ETA Accuracy Instead of Execution

When ETAs improve but execution doesn't, costs compound across several dimensions:

- Planner overload from manual replanning triggered by ETA alerts that have no automated resolution pathway. Every alert requires a human decision, and at scale, those decisions pile up.

- SLA firefighting that consumes senior operations capacity daily. Your best people spend their time reacting to today's ETA problems instead of improving tomorrow's execution logic.

- Margin erosion from inefficient routing, emergency rescheduling, and duplicate visits. A technician dispatched to a failed visit that could have been rerouted earlier is a direct cost hit.

- Delayed root-cause fixes because the team never gets ahead of the cycle. When every day is spent managing drift, there's no capacity to address why the drift happens.

Compliance failures rarely show up fully in penalty invoices.

The hidden operational cost to you includes:

- Planner hours

- Fuel consumption

- Technician overtime

- Customer churn

Together, these typically cost significantly more than the direct penalty.

Improving ETA accuracy on its own treats symptoms, and the cost of symptomatic treatment compounds at scale.

What ETAs Look Like in Optimized Field Service Operations

In operations with high execution maturity, ETAs play a different role.

ETAs are inputs to execution decisions.

The capabilities that define this model look like this:

→ ETAs are recalculated continuously as conditions change throughout the day. (Not just at the start of the route.)

→ When an ETA indicates an SLA is at risk, the system evaluates trade-off options across the full route and adjacent technicians. (Not just the affected job.)

→ Replanning is system-driven. This reduces the cognitive load on operations teams and speeds up response time.

→ Customers and internal stakeholders receive updated ETAs that reflect the revised plan. (Not the ETA that's no longer valid.)
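Evaluating trade-offs across the full route and adjacent technicians can be illustrated with a cheapest-insertion heuristic: try an at-risk or newly arrived job in every position on every technician's remaining route, and keep the option with the lowest SLA lateness across the whole fleet. This is a hedged Python sketch under simplifying assumptions (a flat 20-minute drive between stops, deadlines in minutes after midnight, and hypothetical names such as `fleet_lateness` and `best_insertion`), not a description of any product's algorithm.

```python
from __future__ import annotations
from dataclasses import dataclass

TRAVEL_MIN = 20  # assumption: flat drive time between any two stops


@dataclass(frozen=True)
class Job:
    name: str
    service_min: int
    deadline: int | None = None  # latest SLA arrival, minutes after midnight


def fleet_lateness(now: int, routes: dict[str, list[Job]]) -> int:
    """Total forecast SLA lateness (minutes) across every technician's route."""
    total = 0
    for jobs in routes.values():
        clock = now
        for job in jobs:
            clock += TRAVEL_MIN
            if job.deadline is not None:
                total += max(0, clock - job.deadline)
            clock += job.service_min
    return total


def best_insertion(now: int, routes: dict[str, list[Job]], job: Job):
    """Cheapest insertion: evaluate the job at every position on every route
    and return (lateness, technician, position, new_routes) for the best option."""
    best = None
    for tech, jobs in routes.items():
        for pos in range(len(jobs) + 1):
            candidate = dict(routes)  # shallow copy; only one route is replaced
            candidate[tech] = jobs[:pos] + [job] + jobs[pos:]
            cost = fleet_lateness(now, candidate)
            if best is None or cost < best[0]:
                best = (cost, tech, pos, candidate)
    return best


routes = {
    "tech_a": [Job("A1", 60), Job("A2", 45, deadline=900)],
    "tech_b": [Job("B1", 30)],
}
# Reactive callout at 13:00 (780 min) with a 14:00 (840 min) SLA.
cost, tech, pos, new_routes = best_insertion(780, routes, Job("R", 40, deadline=840))
print(cost, tech, pos)  # 0 tech_b 0
```

Every option on tech_a's route pushes either the callout or A2 past its deadline, so the sketch assigns the callout to tech_b instead. A job-by-job view that only looks at the nearest technician would not evaluate that trade-off.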

Simply put:

ETAs trigger optimization in high-maturity operations. Not apologies.

This isn't about eliminating uncertainty, because field service will always involve unpredictable conditions.

The difference is whether your operation absorbs volatility before it reaches the customer or passes it through as a missed window and a reactive phone call.

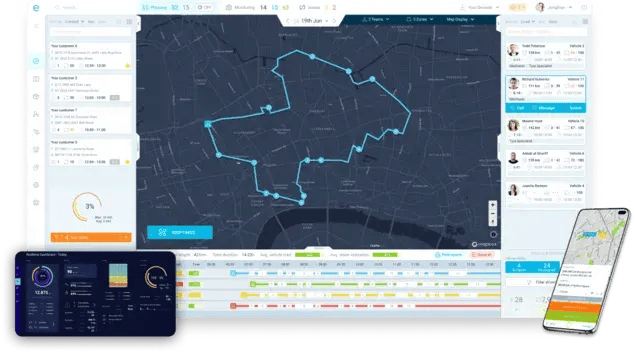

How eLogii Uses ETAs as Data to Improve ETA Accuracy and Field Operations

eLogii is the execution layer that sits between your FSM or CAFM system and your field teams. It doesn't replace either. It's the optimization engine that translates plans into protected outcomes.

In practical terms:

When ETAs degrade, eLogii initiates dynamic routing and replanning across the affected route, evaluating cross-job trade-offs and SLA priority logic automatically.

Reactive callouts, failed visits, and technician delays are rerouted within the existing plan rather than escalated to a planner for manual intervention.

eLogii works alongside FSM and telematics, using their data and extending their capability into execution control.

Our software absorbs same-day volatility so that the disruption is handled by the system. Not by your operations team under pressure.

The principle is simple:

Prediction tells you the problem. Optimization resolves it.

eLogii provides the resolution layer and, in doing so, improves ETA accuracy.

Which Field Service Operations Should Use This?

This argument applies to operations where execution complexity creates meaningful ETA drift. Here's how to know if that's you.

This matters for:

- Operations with contractual or regulatory SLA obligations and real penalties for non-compliance

- Teams running 50+ technicians with multi-job days, same-day volatility, and concurrent compliance-critical work

- Organizations where ETAs are surfaced externally to customers or internally to other teams who make decisions based on them

This doesn't matter (yet) for:

- Static route operations with predictable, low-variance work

- Small teams where a single dispatcher can manage replanning manually without meaningful overhead

- Operations where ETAs are tracked internally but not acted on by customers or downstream processes

If you're in the second group, you'll likely get here eventually as you scale.

But right now, the execution gap between prediction and outcome isn't costing you enough to prioritize.

The Bottom Line

ETA accuracy in field service matters. But it's a means, not an end.

Treating better predictions as the solution to operational problems is one of the most common and most expensive misallocations in field service technology investment.

The pattern is consistent:

→ Organizations invest in more accurate ETAs

→ ETAs get better

→ SLAs keep breaking

What breaks this pattern is execution optimization that responds when forecasts degrade.

So if your operation provides ETAs to customers, the question to ask isn't:

How accurate are our ETAs?

It's:

What happens when our ETAs show we're going to miss?

If the answer is "a planner scrambles," there's a structural gap between your prediction capability and your execution capability.

See how execution-first teams use ETAs correctly, and explore optimization-driven field operations, to understand what closing that gap looks like in practice.

FAQ about ETA Accuracy in Field Service

What is ETA accuracy in field service, and why does it matter?

ETA accuracy measures how closely a predicted technician arrival time matches the actual arrival. It's directly tied to SLA compliance and customer experience: when ETAs are unreliable, customers lose trust and compliance windows get missed. Accuracy matters, but it's only useful when paired with execution capability that can respond when conditions change.

Why do ETAs keep breaking even after investing in better routing software?

Better routing software improves the initial plan, but most tools calculate routes once and stop there. When conditions change mid-day, the plan doesn't adapt. Fixing that requires dynamic routing that adjusts in real time, not just a more accurate starting point.

What causes ETAs to drift during a field service day?

Reactive callouts, failed first visits, SLA priority overrides, and technician delays are the primary drivers. Each event is manageable alone, but they cascade across multi-job routes. A 10-minute delay at job two can become a 40-minute SLA breach by job six as every downstream ETA absorbs the cumulative error.

How is dynamic routing different from simply recalculating ETAs?

Recalculating ETAs updates the forecast without changing the plan. Dynamic routing makes active sequencing and trade-off decisions across the full route, reordering jobs, reassigning technicians, and protecting high-priority SLAs. One tells you the updated prediction; the other changes the execution to improve the outcome.

What should you look for when evaluating field service optimization tools?

Focus on execution response capability over ETA visibility. Evaluate whether the tool can replan across multiple jobs and technicians simultaneously, handle SLA-aware routing logic, and absorb same-day changes without manual intervention. The key test: when an ETA degrades past a threshold, does the system act on it or just report it?